How Effective Altruists Use Threats and Harassment to Silence Their Critics

How does the Effective Altruist community respond to critics? With threats and harassment. Even people who still call themselves "Effective Altruists" are afraid to openly criticize the community.

In a previous newsletter article, I briefly mentioned that Karen Hao made a mistake in her otherwise excellent book Empire of AI, which examines the rise of OpenAI and CEO Sam Altman’s messianic mission to build God-like superintelligence. Basically, Hao used a government document that included the wrong units. Consequently, Hao’s estimate for how much more water an AI datacenter uses compared to the city of Cerrillos, Chile, was off by a factor of 1,000. Whoops!

But mistakes happen. Even the most punctilious author is going to slip up at some point, because (of course) we’re all fallible. In my personal opinion, what matters just as much as avoiding mistakes in the first place is responding well to mistakes being pointed out. And Hao has handled the situation extremely well, I think. She immediately acknowledged the error, thanked the person who discovered it, and is making arrangements with her editor to rectify the situation. She also reiterated that her general point remains: AI uses up a lot of water.

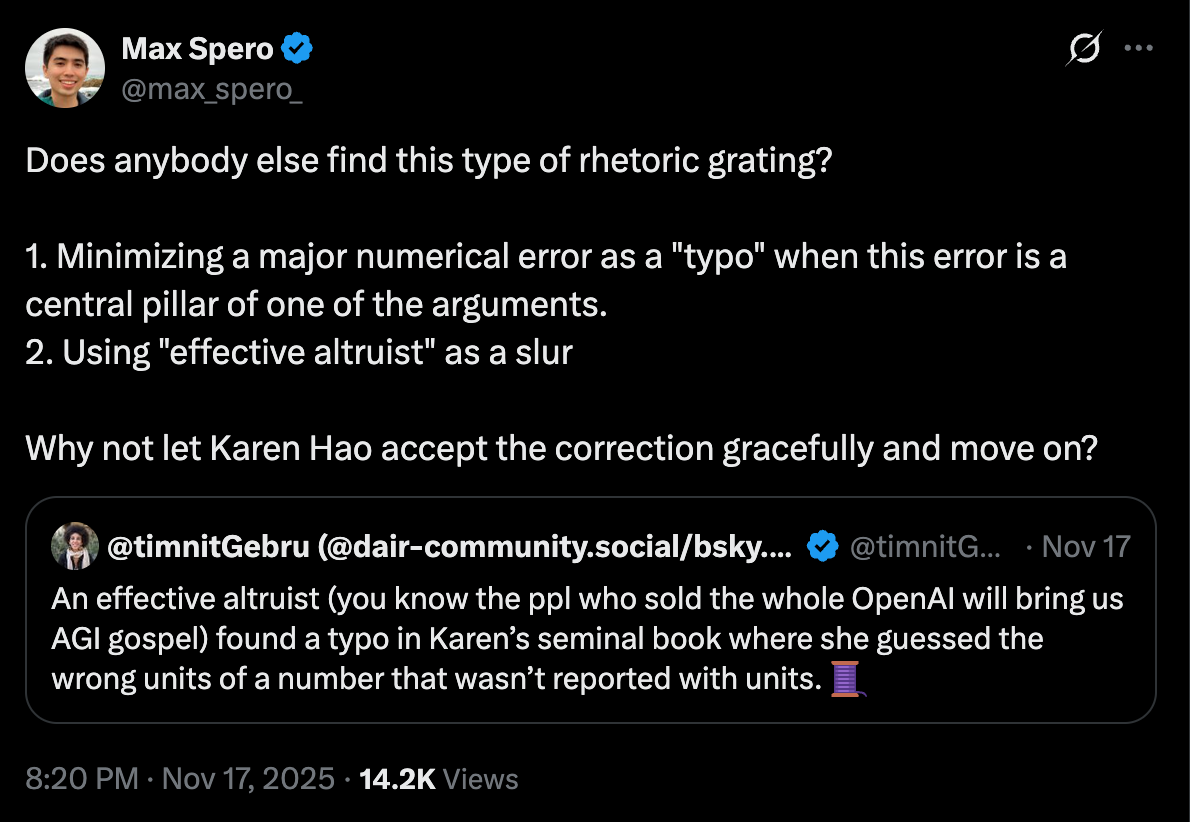

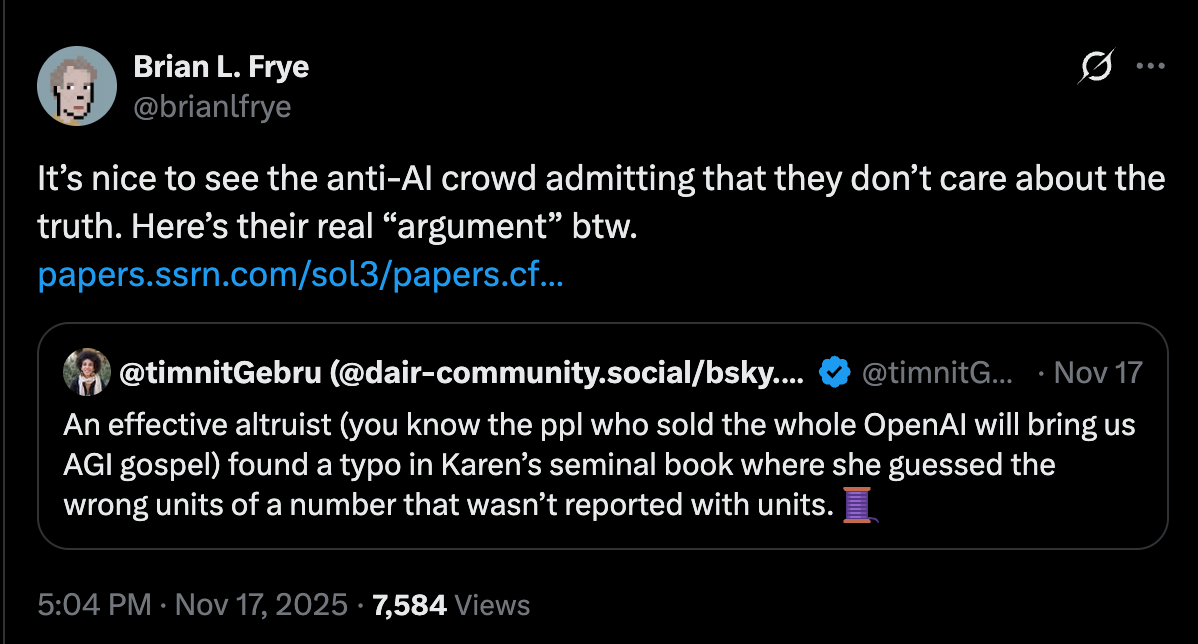

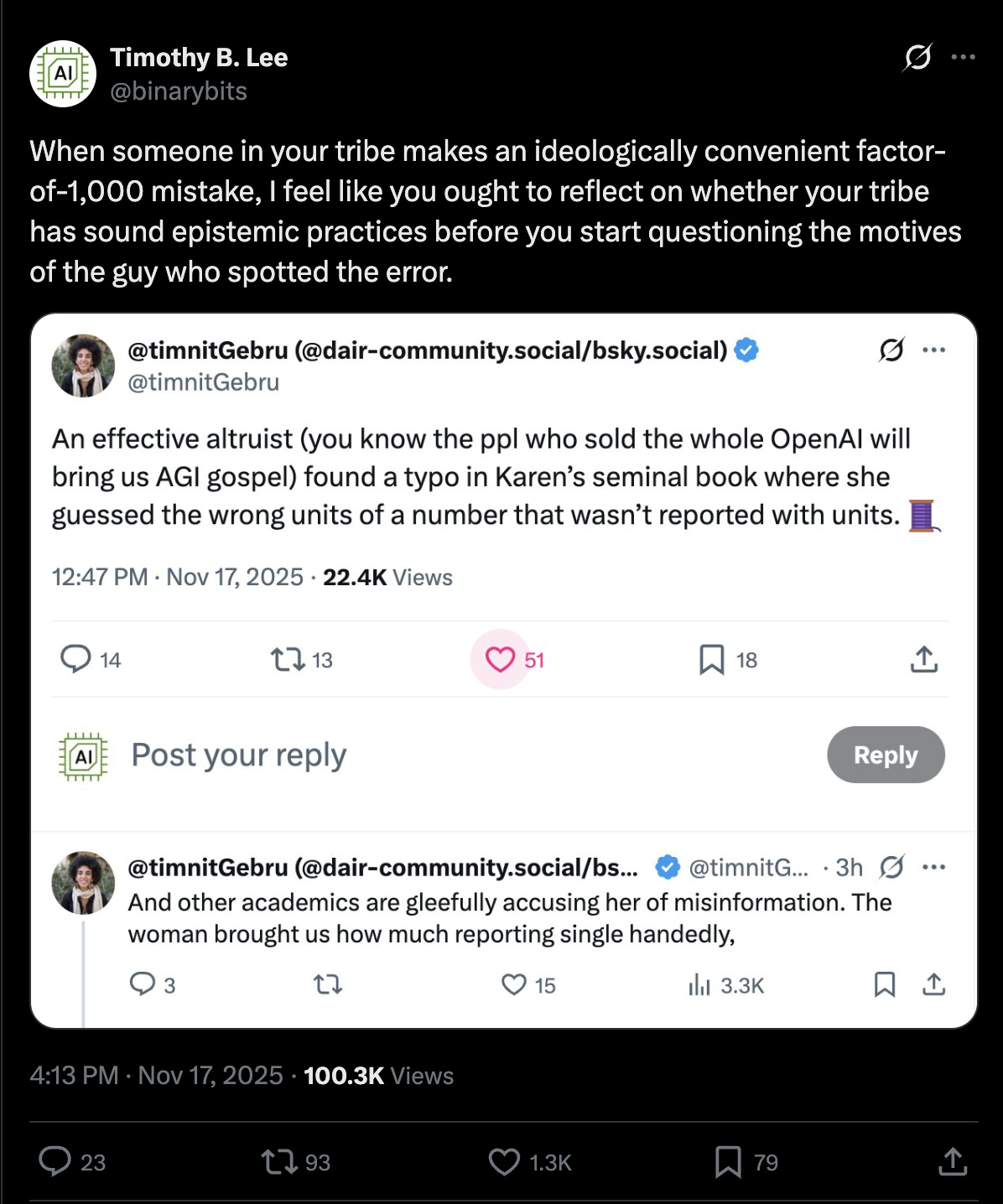

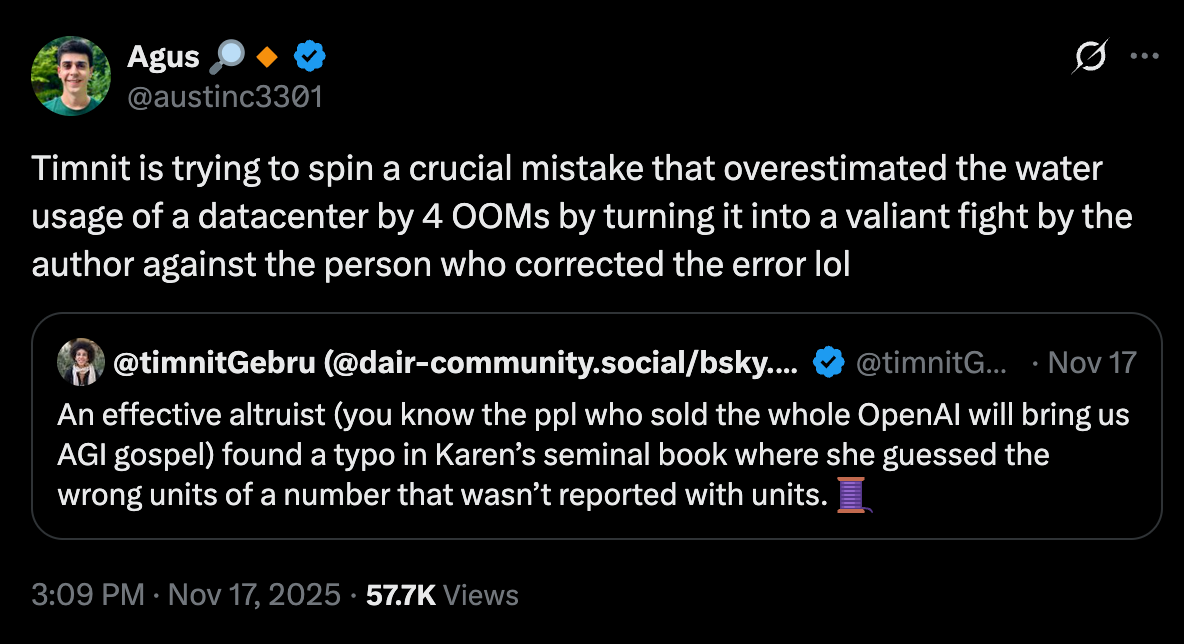

Many people on social media weighed in on the debacle. Timnit Gebru, who I collaborated with on a 2024 paper introducing the TESCREAL framework, posted a number of tweets responding to the snafu. One of these was retweeted by numerous people who found her comments problematic. Here are a few examples:

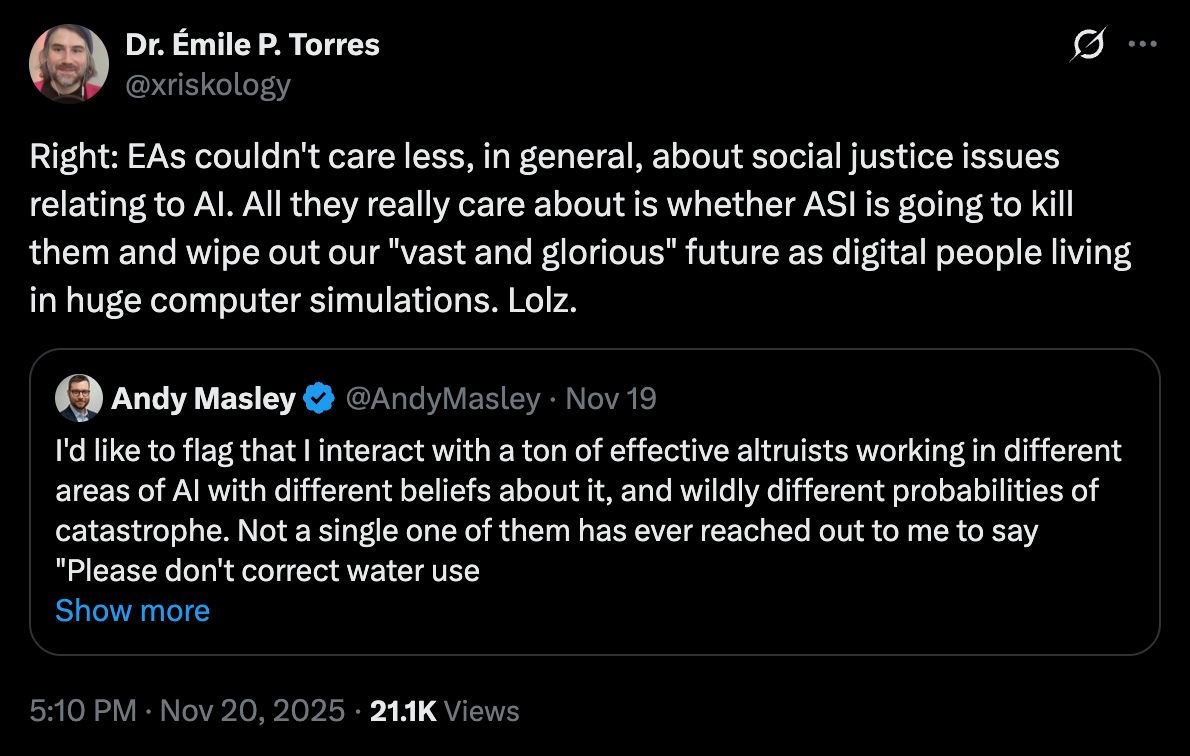

Some of the voices expressing disapproval of Gebru’s response (e.g., Angus, above) are Effective Altruists (EAs). The guy who first uncovered the error in Hao’s book, Andy Masley, is the director of Effective Altruism DC. In general, EAs don’t much care about the social justice issues surrounding AI, and I’ve noticed a pattern of them trying to downplay these issues, including AI’s water use. Their focus instead tends to be more longtermist, concerning the speculative possibility of “misaligned” superintelligence exterminating humanity, thus foreclosing our “vast and glorious” posthuman future among the stars.

Given the hoopla, I thought it might be worth briefly revisiting how EAs themselves have handled criticisms of their own community and worldview. This is not a “whataboutism” argument against those who complained about Gebru. Rather, it’s to point out just how tribal and ideologically close-minded folks in the EA cult often are, and hence how hypocritical it is for them to complain about how badly AI ethics luminaries like Gebru are handling the criticisms leveled against Hao.

What We Owe Our Critics

One of the most egregious errors in a major publication from a leading EA comes from Will MacAskill’s What We Owe the Future (2022). No, I’m not talking about his dismissal of the Repugnant Conclusion, or his suggestion that our systematic obliteration of the biosphere may be net positive because it means less wild-animal suffering. I’m referring to his claim that “it’s hard to see how” 7 to 10 degrees C of global warming “could lead directly to civilisational collapse.” He proceeds to assert that, while “climatic instability is generally bad for agriculture,” his “best guess” is that “even with fifteen degrees of warming, the heat would not pass lethal limits for crops in most regions.”

For a 2022 article published by the venerable Bulletin of the Atomic Scientists, I asked numerous climate experts about MacAskill’s claim. Every single one, unsurprisingly, told me that it’s outrageous and completely unsupported by the best available evidence. No, global agriculture would not survive just fine if warming reached 15 C above pre-industrial levels (!!). I also noted in my article that, when I contacted most of the climate experts whom MacAskill thanked in the acknowledgments section, some said that they had never even interacted with him or his team of researchers, and hence that including their names was inappropriate, since it suggested that his book had been scientifically vetted more than it actually was. As I wrote:

“There is a mistake. I do not know MacAskill,” replied one of the scientists.

“This comes as something of a surprise to me, because I didn’t consult with him about this issue, nor in fact had I heard of it before,” wrote another.

“I was contacted by MacAskill’s team to review their section on climate change, though unfortunately I did not have time to do so. Therefore, I did not participate in the book or in checking any of the content,” a third scientist told me.

Sigh.

Threats and Harassment

How did the EA community react to my article? Did they readily acknowledge that MacAskill made erroneous, unsubstantiated claims in his book? Did MacAskill publicly commit to removing his comments about climate change along with some of the names in the acknowledgments section? No. To the contrary, one noted EA, a close collaborator of MacAskill’s, literally harassed me and three other people I’d worked with on my article by sending us a barrage of emails. I was simultaneously attacked on the EA Forum (the main online hub of the community), and the day after my article was published I found an anonymous email in my inbox that said: “Get psychiatric assistance before it’s too late, buddy.” Before it’s too late? What is that supposed to mean?

All of this came after I’d already received numerous threats of physical violence and a great deal of online harassment from EAs, all for doing nothing more than critiquing their ideology and community in outlets like Salon and Truthdig. One EA sent me a DM that read: “Better be careful or an EA superhero will break your kneecaps.” Another person echoed this on Twitter, adding “I wish you remained unborn.” Numerous people on the EA Forum argued that I have a personality disorder and/or suffer from episodes of psychosis (!!), during which I write my articles criticizing EA. Hence, they reasoned, if they could predict when I’m going to have an episode, they could better prepare for my articles. WTF.

In late 2022 and 2023, dozens of anonymous accounts appeared on Twitter for the sole purpose of harassing me and my friends/colleagues. I.e., the only thing these accounts tweeted about was me and my criticisms. One account, named “Evíle P. Trolles,” tried to impersonate me with the handle @xriskologist (my Twitter handle is @xriskology). Two other accounts posted defamatory articles (that is, articles making factually false claims about me), one of which was later deleted by the author; the other appeared on the EA Forum. I also received an email threatening to dox me, and another that referenced a short film about a murder-suicide, saying: “I hope it will take something far less extreme than what happens in the film to make you look at the kind of person you’re becoming,” by which they meant a critic of the TESCREAL cult.

Most of this happened when I was living in Germany. My routine at the time was to work at my office until 12am (I’m a workaholic, for better or worse!). Fearing for my safety, I changed my route home. When I mentioned some of these threats on social media, someone I’ll call “Tim” contacted me to say that I should take them seriously (which I already was). Why? Because Tim had attended an “AI safety” workshop in Berkeley in which violence was discussed as a strategy for ensuring that humanity attains its cosmic potential. Here’s what I wrote in a Truthdig article about this:

The AI safety workshop was held in Berkeley in late 2022 and initially funded by the FTX Future Fund, established by Sam Bankman-Fried, the TESCREAList who appears to have committed “one of the biggest financial frauds in American history.” Berkeley is the home town of Yudkowsky’s own Machine Intelligence Research Institute (MIRI), where one of the workshop organizers was subsequently employed. Although MIRI was not directly involved in the workshop, Yudkowsky reportedly attended a workshop afterparty.

Under the heading “produce aligned AI before unaligned [AI] kills everyone,” the meeting minutes indicate that someone suggested the following: “Solution: be Ted Kaczynski.” Later on, someone proposed the “strategy” of “start building bombs from your cabin in Montana,” where Kaczynski conducted his campaign of domestic terrorism, “and mail them to DeepMind and OpenAI lol.” This was followed a few sentences later by, “Strategy: We kill all AI researchers.”

Participants noted that if such proposals were enacted, they could be “harmful for AI governance,” presumably because of the reputational damage they might cause to the AI safety community. But they also implied that if all AI researchers are killed, this could mean that AGI doesn’t get built. And foregoing AGI, if properly aligned, would mean that we “lose a lot of potential value of good things.”

Not the Only One

I’m not the only one who’s feared for their safety simply because they dared to criticize the EA community. As I also noted in Truthdig:

Simon Knutsson … wrote in 2019 that he had become concerned about his safety, adding that he’s “most concerned about someone who finds it extremely important that there will be vast amounts of positive value in the future and who believes I stand in the way of that,” a reference to the TESCREAL vision of astronomical future value. He continues:

Among some in EA and existential risk circles, my impression is that there is an unusual tendency to think that killing and violence can be morally right in various situations, and the people I have met and the statements I have seen in these circles appearing to be reasons for concern are more of a principled, dedicated, goal-oriented, chilling, analytical kind.

Knutsson then remarks that “if I would do even more serious background research and start acting like some investigative journalist, that would perhaps increase the risk.” This stands out to me because I have done some investigative journalism. In addition to being noisy about the dangers of TESCREALism, I was the one who stumbled upon Bostrom’s email from 1996 in which he declared that “Blacks are more stupid than whites” and then used the N-word.

In early 2023, a group of about 10 people in the EA community, calling themselves “ConcernedEAs,” published an anonymous critique of the movement. Why did they publish this anonymously? Partly because they’d seen how the EA community treated me: with an avalanche of harassment, threats, and anonymous emails. Here’s what “ConcernedEAs” wrote in their post:

Experience indicates that it is likely many EAs will agree with significant proportions of what we say, but have not said as much publicly due to the significant risk doing so would pose to their careers, access to EA spaces, and likelihood of ever getting funded again.

Naturally the above considerations also apply to us: we are anonymous for a reason.

In other words, if you criticize the community, you will be punished in one way or another. EAs like the criticisms that they like, so to speak, but if you criticize core features of their ideology, you’d better watch out. For all their talk of “epistemic humility” and seeking out “crucial considerations,” they can be quite vicious. Many are extremely confident that EA is literally “saving the world” (a phrase that folks like MacAskill have actually used), and hence it’s not a mere peccadillo to impede their good work aimed at bettering the world. To the contrary, doing so makes you an enemy of the good.

Conclusion

I could say a lot more about the mistreatment I received. What I mention above is about 10% of what I dealt with. The point is that no one should look to the EA community as exemplifying good habits of epistemic and moral conduct. My experience, and the experience of other critics, reveals a group of entitled, arrogant individuals, driven by a messianic sense of moral self-importance, with a low tolerance for any criticisms that target key pillars of their “altruistic” ideology.

But what do you think? What am I missing? Am I wrong? As always:

Thanks so much for reading and I’ll see you on the other side!
