Utter Madness ...

On why I won't join hands with "anti-AGI" pro-extinctionists, and why I think the AGI race is extremely dangerous even though we're nowhere close to building AGI. (1,800 words)

People on social media have repeatedly asked me two questions:

Why are you (they say to me) so harshly critical of Eliezer Yudkowsky? Why not join him in warning about the dangers of AGI and calling for an international treaty to stop the AGI race? It’s counterproductive to target someone with such a big public profile! You should be working with rather than against him!

Wait, you say that the AGI race should be stopped immediately and that it’s incredibly dangerous, yet you also say that we’re nowhere close to building AGI. How does that make sense?

Taking these in order, a reminder that Yudkowsky has made remarks like these:

If sacrificing all of humanity were the only way, and a reliable way, to get … god-like things out there — superintelligences who still care about each other, who are still aware of the world and having fun — I would ultimately make that trade-off.

Yudkowsky emphasizes that this “isn’t the trade-off we are faced with” right now. But if it were, he’d willingly sacrifice our species to see artificial super-beings flitting about the universe “having fun.”

Think for a moment about how utterly outrageous this is. He’s saying that he’d be willing to sacrifice all Chinese people, Indian people, South Africans, Norwegians, everyone in Nigeria, Chile, Mongolia, and Japan, the entire populations of Toronto, Bangkok, Moscow, and London, if doing so were the “only” and a “reliable” way of creating fun-having digital space brains.

That is absolutely abhorrent. It’s atrocious. Imagine saying that you’d be willing to sacrifice my life to realize your dream: a big mansion in Hollywood. Everyone would agree that saying this would be completely unacceptable. Now imagine a TESCREAList, like Yudkowsky, saying they’d sacrifice my life, the lives of my family, and the lives of everyone else on Earth to realize their dream of a cosmic utopia full of digital space brains. I have no words to express how horrendous this is.

My friend Mark Gubrud, who often disagrees with me, wrote this:

(Note that Mark is the person who first coined the term “artificial general intelligence” back in 1997. Shane Legg then seems to have independently coined it in the mid-2000s.)

My response didn’t beat around the bush. “Not sure how else to put this,” I wrote,

there are no doubt many things that 1940s Nazis and I would have agreed about re: the environment. Does that mean I should have locked arms with them in fighting to preserve the natural world? No, absolutely not. Never. You might say this isn’t analogous with the case of Yudkowsky — and I would agree, because what Yudkowsky has said out loud, in public, is far worse than anything fascists have said. Those fascists were eugenicists who wanted to eliminate specific social groups for the greater good. Yudkowsky is a eugenicist who’s explicitly said that he’d sacrifice literally everyone on Earth to realize his incredibly dumb version of utopia. … What. The. Fuck. What he’s saying here is absolutely fucking insane. It’s among the most extreme, horrific things I’ve ever heard anyone ever say — ever. It’s worse than anything that’s come out of the mouth of Donald Trump, Steve Bannon, or Stephen Miller. Does this make sense now? I want absolutely nothing to do with people who hold fucking insane, incredibly dangerous views like those he’s repeatedly expressed.

(Sorry for the vulgarities, but I think they’re warranted in the face of omnicidal threats.)

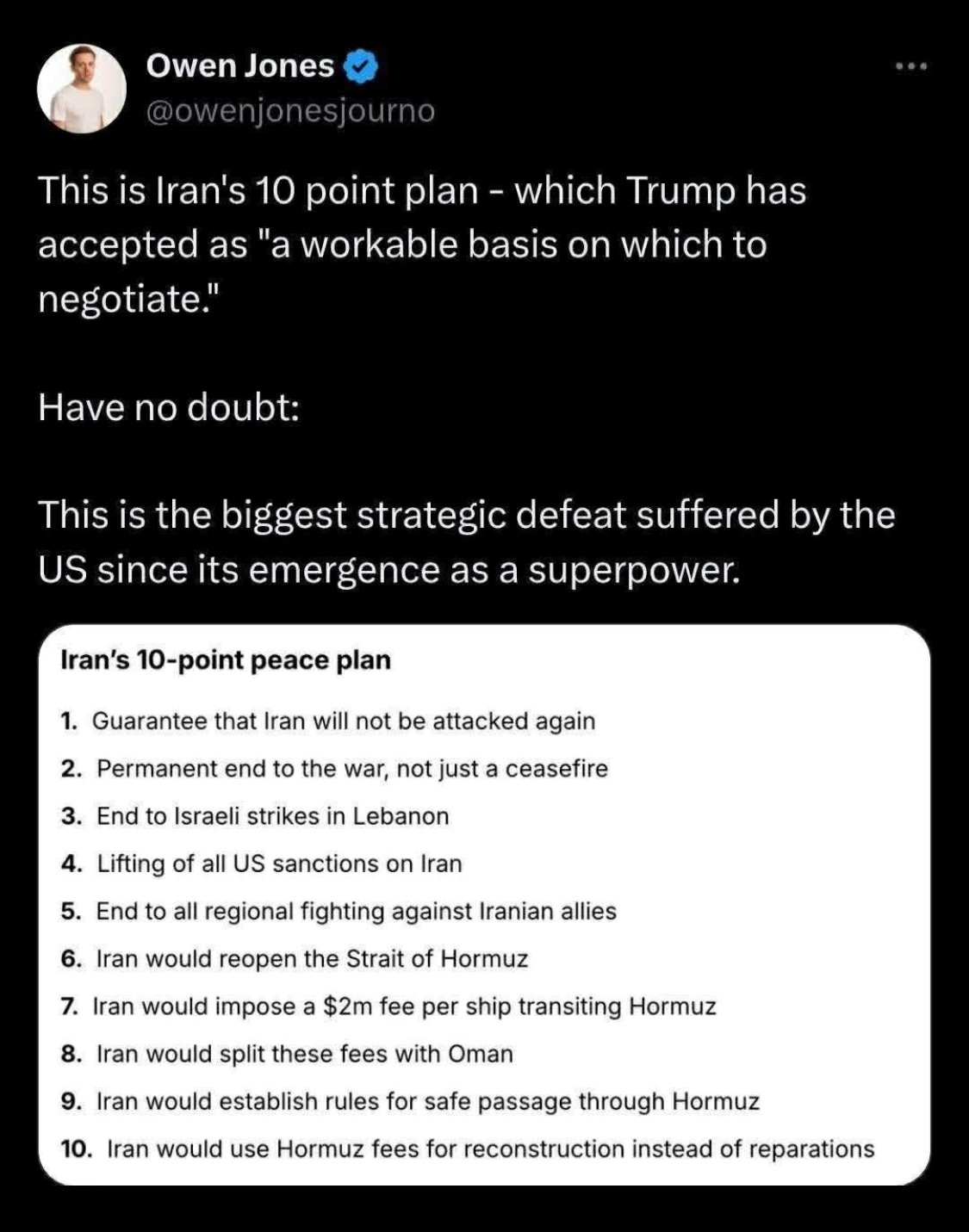

Here’s a similar example: as you’re all aware, Donald Trump recently said that “a whole civilisation will die tonight, never to be brought back again. I don’t want that to happen, but it probably will.” He then backed off and has apparently reached an agreement according to which Iran gets pretty much everything they wanted (lolz):

The fact that Trump didn’t commit genocidal war crimes against Iran doesn’t for one moment excuse his words. Yet, what Trump said is not as bad as what Yudkowsky has said, and we must acknowledge that. Trump is basically declaring that he’d sacrifice Iran for some obscene notion of the greater good. Yudkowsky is saying he’d sacrifice humanity for some bizarre notion of the greater cosmic good. What is omnicide other than all possible genocides put together?

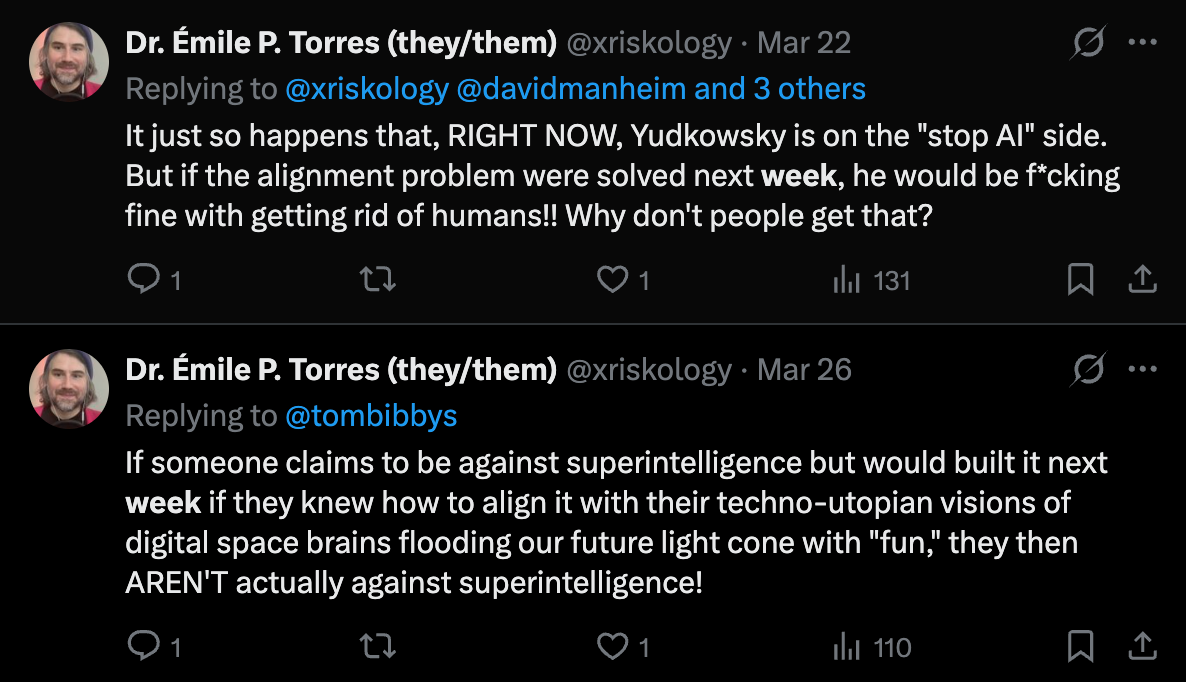

Furthermore, as I wrote here, Yudkowsky isn’t actually anti-AGI. He’d build AGI literally next week if he thought it was “value-aligned,” meaning aligned with the utopian values of the TESCREAL worldview (cosmic paradise, digital space brains, and all that). He’s not on Team Human, he’s on Team Posthuman.

What I want is to join forces with folks on Team Human who actually do think we should never build AGI. If the alignment problem were solved next week, I’d still oppose AGI. (I provide a detailed argument in my book for why AGI would almost certainly have catastrophic consequences if built, no matter what, which makes me very anti-AGI in an unconditional way.)

***

As for the second question, it might be worth noting that there are at least four main reasons one might think AGI won’t arrive in the near future. These are:

1. The systems that power current AI, large language models (LLMs), aren’t going to get us to AGI by themselves. No matter how much AI companies scale them up by increasing compute (computational resources), training data, and their parameters, they just don’t have the right architecture to become AGI. LLMs by themselves are a dead end, though there might be other systems, architectures, and approaches that could eventually get us there.

2. AGI isn’t a technology that we could ever build. It might simply be too difficult for our species. This is made plausible if one believes there may be other technologies that are in theory possible but in practice out of our reach, such as spacecraft that travel at 99.99% the speed of light. Perhaps some kind of super-clever alien species could figure this out, but we probably never will.

3. AGI, and especially ASI (artificial superintelligence), isn’t possible to build in theory. It’s like a perpetual motion machine, or designing a spacecraft that can exceed the speed of light. There’s just no way to build an artificial system that surpasses human capabilities in every cognitive domain of interest, perhaps because we represent the highest level of “intelligence” attainable.

4. AGI and ASI are not even coherent ideas to begin with. What does it mean to build an “everything machine” that can “exceed” humans in every domain of interest? Heck, AI companies and researchers can’t even agree upon a definition of “AGI” — OpenAI itself proposes multiple inconsistent definitions on its own website! What, then, are we even talking about?

I very much agree with (1), and am sympathetic with (4). However, I also find (2) appealing for the following reason: it could be that a certain degree of societal, political, economic, etc. stability is necessary to build AGI. However, the stepping-stone systems that we’d need to build in order to reach AGI may wreak so much havoc that the fabric of society unravels, thus making AGI unreachable. In other words, there may be a negative feedback loop here such that the closer we get, the further away we end up: building AGI requires societal stability, but the more AI we have, the less stable things become.

On the ineptitude of AI … Shot and chaser:

A chapter in my book goes into immense detail about all the harms caused by current AI systems, which the AI companies have built in hopes of scaling them up to become AGI. When one surveys all these harms catalogued in one place, over the course of 30 pages, it’s completely shocking, and forces one to admit that it’s not implausible the AGI race itself could potentially cause societies to collapse (or at least play a significant causal role in helping along the process).

This is why I call for an immediate, permanent halt to the AGI race — on scaling up generative AI systems. They have very few benefits, and threaten to undermine key civic institutions like the rule of law and free press, as well as facilitating things like domestic mass surveillance.

That’s how someone can simultaneously say that AGI isn’t around the corner and that the race to build AGI must stop immediately. To this, I’d also add that if we were to someday build AGI, I have no reason to expect it to be controllable by default. If humanity were suddenly joined by an entity that could outmaneuver us in every important respect, solve complex problems faster than any human, set and modify its own goals without human intervention, and act as an autonomous agent in the world, etc., then we’d be in very big trouble.

Fortunately, we’re nowhere close to building such an entity. Unfortunately, we don’t need AGI for AI to destroy the world.

***

Speaking of my book, I now have 85,000 words written! I’m almost done, which means I’ll get back to posting two articles per week in probably less than a month. The book will end up around 100,000 words long, and my hope is that it offers a devastating and original critique of the AGI race (I don’t know of anyone else who makes the points I do).

But I’m in a bit of a pickle, as I’m struggling to find a new publisher who can get it out later this year — rather than 13 months from now, which is what my current publisher is offering. Who the hell knows what the world will look like in 13 months (lol); the topics I discuss are relevant right now!

I have a few promising leads, and I’m not fundamentally opposed to self-publishing it in the end if that’s necessary to get it out sometime in 2026. (The book will still be massively peer reviewed either way, as I send most of what I write out to many dozens of people for feedback and criticisms.) Anyways, just giving you an update. The current table of contents is:

Clown Car Utopia: Why We Must Stop AI to Save Humanity

Chapter 1: Digital Space Brains

Chapter 2: An Alphabet Soup of Ideologies

Chapter 3: The Road to Utopia

Chapter 4: Death to Humanity

Chapter 5: A Trail of Destruction

Chapter 6: Our Precious Planet

Chapter 7: Butlerian Jihadists

I honestly could not do this without your support! As always:

Thanks for reading and I’ll see you on the other side!

Woah, this article covers almost all the thinking I have been doing about AGI. I agree with a lot of what you say. The amateur philosopher in me (the one who didn't read up in detail about Yudkowsky, nor on the difference between AGI and ASI) has a few things to share.

Unless Yudkowsky has somehow mathematically proven there is some kind of universal morality that supersedes anything human-wrought, I think his views as you describe them might contradict themselves. If we talk about the "greater good", shouldn't it always be about the greater good for mankind? How does eliminating mankind achieve that? Whichever way you look at it, this is all thought out by and for humans, and I doubt whether we can know what is good for "the rest of the universe". That'd be quite arrogant. In short, eliminating mankind would also wipe out all our values, and whatever is left would literally have no meaning at all. Well, that was just the logical way of looking at it. The human in me, the one that is consciously aware he is subject to all kinds of human mechanisms (emotion, social values, etc.), 100% agrees about the atrociousness of his view.

As for AGI, again I agree with your estimate of where we likely stand, and also with the dangers it poses. But I fear that the Djinn that is "the road to AGI" is out of the bottle, and I cannot conceive of a way to put it back. I can't help but think that the scenarios in which we might be able to put it back may be too bleak to consider, even though pure logic dictates we should (compare how we deal with climate change). In that respect the future really looks bleak. Things are even bleaker when we look at the number of challenges we currently face that shouldn't have been existential, but still are due to human stupidity: neglecting vulnerabilities of OpenClaw-like architectures, sloppy software engineering practices in the very company that claims to be at the forefront of alignment science (Anthropic), government systems of powerful countries that don't have guardrails against lunatics with genocidal tendencies, Big Tech companies that lie their asses off in the name of profit, climate change, and the list goes on. All of these problems are theoretically much easier to solve than the existential problems caused by AGI/ASI.

Despite all of the above, I entertain a view that I am still pondering, unsure whether it is naive (it probably is). AGI is dangerous for exactly the reasons you name. The alignment problem is mathematically unsolvable. The Djinn cannot be put back. So where does that put us? Well, imho, with the only skill with which humanity has time and time again shown that it can perform near miracles: Engineering. However small the chances that Engineering will save us, it is the only option we have, so we MUST take it. Engineering what? Well, a good enough solution to the alignment problem: heuristics, patches, guardrails, etc., and probably a huge amount of luck. Again, it is not much to go on, but it is all we have. So we had better get going and not only pray for, but build, that miracle.

Congrats on the book... A year is a very long turnaround time. Maybe you could split the language editions, or keep the rights for English and self-publish, but it's very long...
