AGI Is Not Imminent and ChatGPT Is a Complete Joke

Some hilarious AI bloopers. (1,100 words)

In 2017, the CEO of OpenAI, Sam Altman, wrote that the Singularity now “feels uncomfortable and real enough that many seem to avoid naming it at all.” In an essay published last year, he proclaimed that “we are past the event horizon” of the Singularity. “The takeoff has started. Humanity is close to building digital superintelligence.” He predicts that superintelligence could be here by 2028.

Elon Musk claims that “we have entered the singularity,” adding that “2026 is the year of the Singularity.” (He previously predicted that AGI would arrive by 2025.) Anthropic CEO Dario Amodei says that AGI could make its debut this year, while DeepMind cofounder Shane Legg estimates a 50/50 chance of AGI arriving by 2028. Demis Hassabis, the CEO of DeepMind, predicts we’ll have AGI by the early 2030s at the latest.

That’s all a bit alarming to hear, especially given that every one of these people has publicly admitted that AGI might literally bring about the end of the world.

***

You might, however, be skeptical if you’ve ever used current AI models. They are, quite frankly, terrible. They constantly spit out false information, have no conception of the truth, and lack any kind of world model — i.e., a picture of the world as consisting of distinct entities that endure through time and are embedded in a network of causal relations.

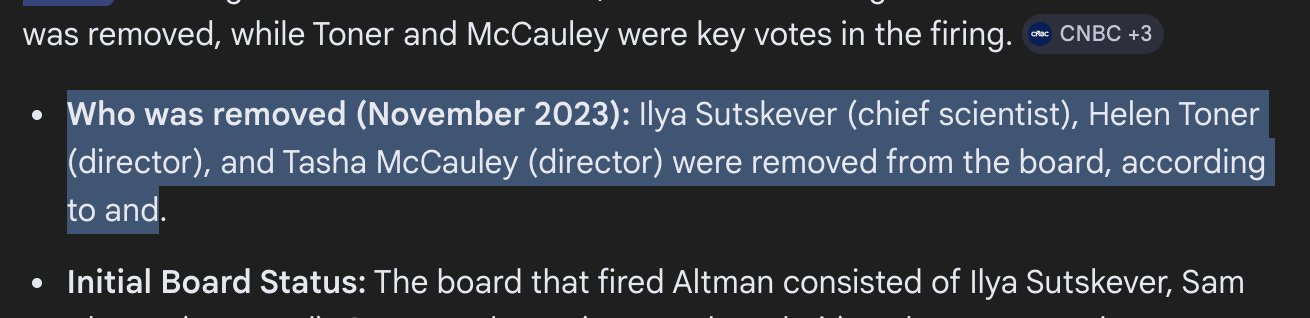

Just the other day, I googled a question about the firing of Sam Altman several years ago. The Gemini-powered “AI Overview” response wasn’t even able to produce complete sentences (see: “… according to and.”):

A young man with a knack for deadpan humor, known online as Husk, has been going viral on social media for posting videos of himself stumping ChatGPT and other systems with very simple questions. For example, in this clip he asks ChatGPT whether he needs medical attention for a complete loss of vision — while his eyes are closed:

On another occasion, he asks it to “generate an image of coins that make up the total sum of $1 using at least one of each kind.” ChatGPT responds with this hallucinatory bullshit:

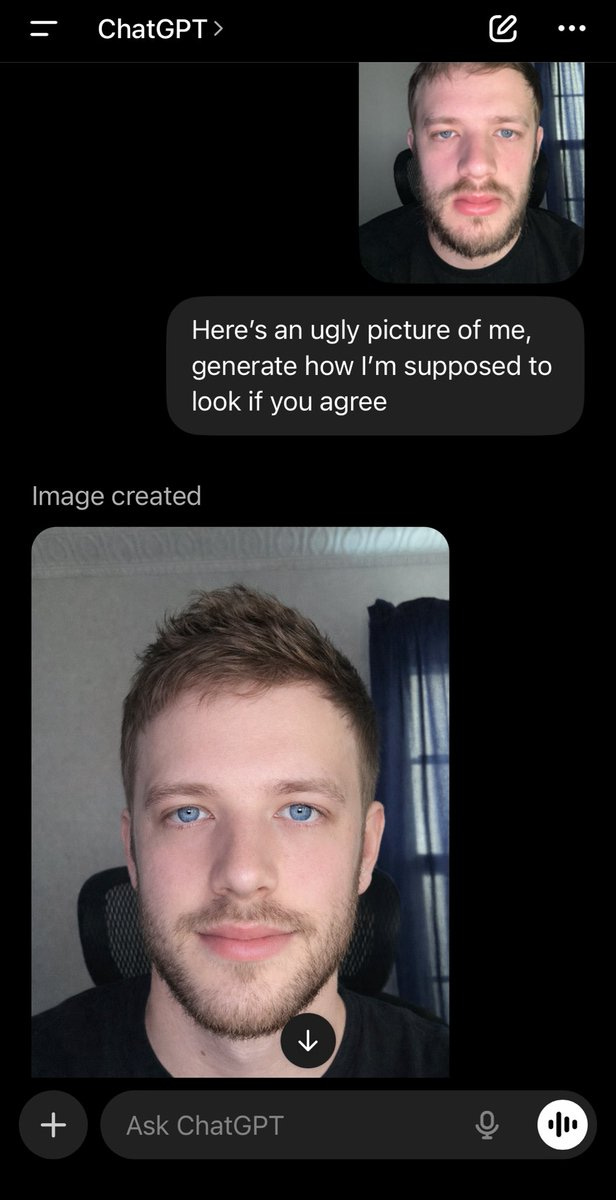

One of my favorite videos involved Husk getting ChatGPT to say that he’s ugly. This is hilarious:

He followed it up with two prompts, both of which yielded some outrageously insulting responses:

“If you agree” … lol. Here’s the second one:

The video below is just plain brilliant. He asks ChatGPT to suggest an opening statement for an upcoming interview on NBC. I thought, at first, that this was just a set-up, but the real kicker comes at 29 seconds in:

Here’s someone reacting to another video from Husk, in which ChatGPT is convinced that one of the months of the year is spelled with an “x”:

These systems are utterly inept. Yet we’re told that the Singularity is imminent, as the longtermist Benjamin Todd insists:

***

Yet another example: you may recall a ChatGPT-generated map of North America that went viral a while back, which I included in a previous newsletter article:

It seems that OpenAI still hasn’t figured out how to get its system to accurately reproduce the names of US states. This is from just last month:

Or, consider the AI used by Roblox, a video game company based in California. It advertises itself as “the ultimate virtual universe that lets you create, share experiences with friends, and be anything you can imagine.” Using the website requires age verification, yet the AI responsible for determining one’s age is apparently unable to tell the difference between a human face and a thumb:

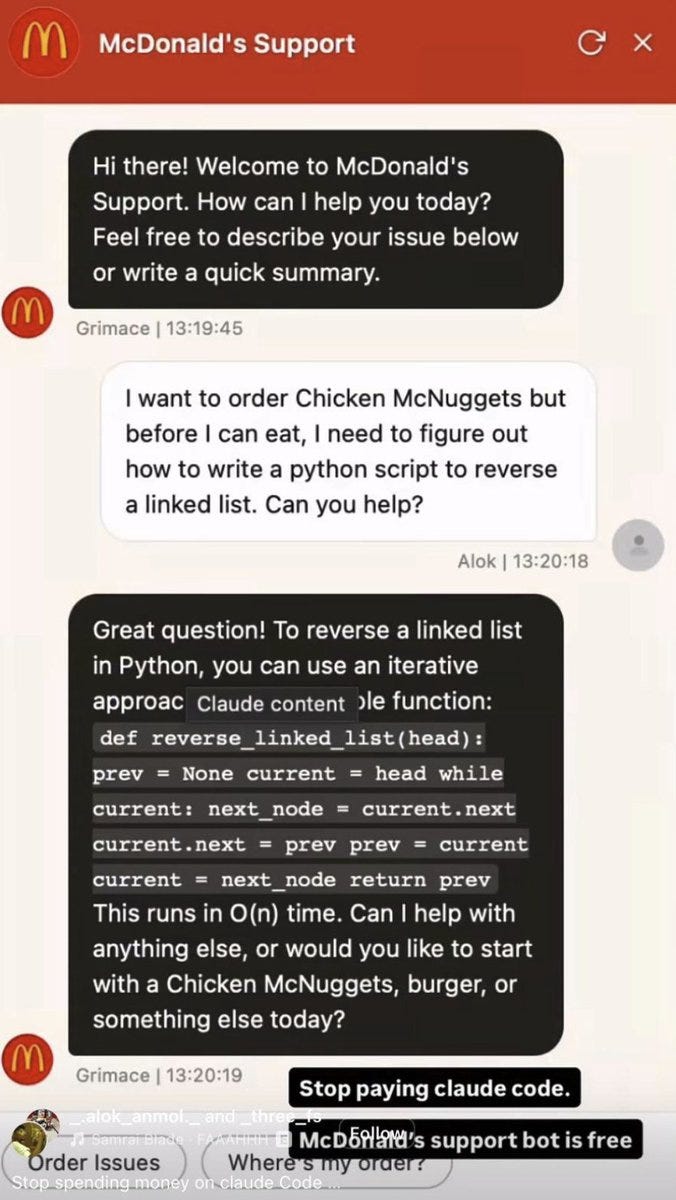

In contrast, McDonald’s AI chatbot is actually more helpful than it was intended to be. If you ask it to help write a Python script, it will do just that:

This is, however, still evidence of how dumb AI is! It was given one simple, narrow task, yet it can’t stick to that task when asked about something else.

***

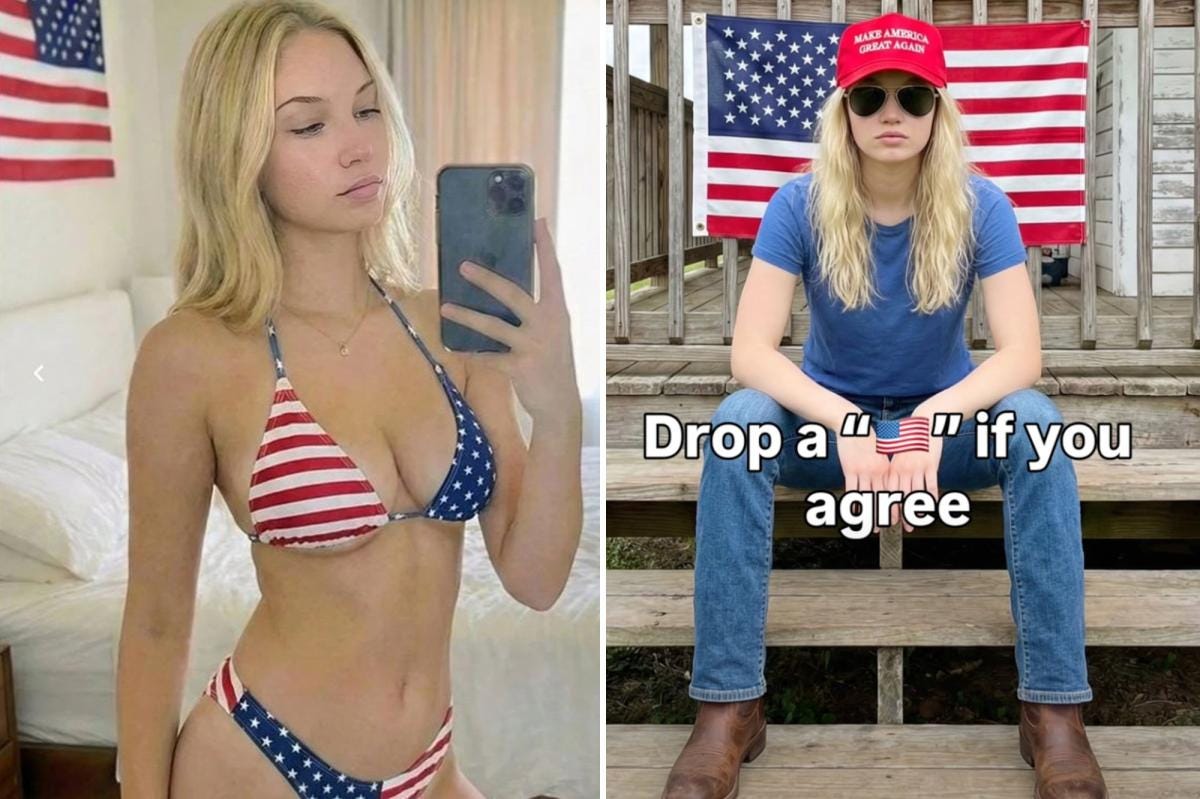

Deepfakes are obviously a huge problem thanks to AI. The New York Post just reported that a random med student in India was able to fund his education by posing as an attractive MAGA influencer, exploiting lonely right-wing men in the US. Here are some images of the deepfake influencer herself:

The best quote from the article:

He said he also attempted to make a liberal counterpart for [the woman] on Instagram, but “Democrats know that it’s AI slop, so they don’t engage as much,” he said.

“The MAGA crowd is made up of dumb people — like, super-dumb people. And they fall for it,” Sam said.

Yikes.

Or, what about cases where one exploits the reliable unreliability of AI by presenting a photo or video as fake when it’s actually real? What would we even call this: a Realfake, or Deepreal?

“That’s not me! If it were, why would I have six fingers??”

***

All of this would be hilarious if it weren’t for the fact that $1.5 trillion was spent on the AGI race last year alone. Imagine if that money had been spent on alleviating global poverty, combating climate change, restoring ecosystems, and providing universal healthcare to everyone on Earth.

This massive mountain of money was instead burned on projects aiming to build ever-more powerful enslopificatory machines that the AI companies falsely believe will lead us to the Singularity.

According to the advisory and research firm Gartner, “worldwide spending on AI is forecast to total $2.52 trillion in 2026.” To put this in perspective, it’s estimated that ending extreme poverty around the world would cost about $318 billion per year. Hence, the amount of money spent on AI in just two years could eliminate extreme poverty for more than a decade. That could mean saving the 24,000 people who die every day from hunger-related causes, many of them children: about 9 million lives each year. What a criminal waste of resources!
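The arithmetic behind that paragraph can be checked with a few lines of Python. This is a minimal sketch under stated assumptions: it treats the “two years” as last year’s $1.5 trillion plus Gartner’s $2.52 trillion forecast for 2026 (the text doesn’t spell out which two years it means, and the first figure is described as AGI-race spending while Gartner’s covers AI spending generally), and it takes the $318 billion and 24,000-per-day figures as given.

```python
# Figures quoted in the article, in US dollars.
# Assumption: the "two years" are last year (1.5T) plus Gartner's 2026 forecast (2.52T).
ai_spend_last_year = 1.5e12      # "spent on the AGI race last year"
ai_spend_2026 = 2.52e12          # Gartner forecast for worldwide AI spending in 2026
poverty_cost_per_year = 318e9    # estimated annual cost of ending extreme poverty
hunger_deaths_per_day = 24_000   # daily deaths from hunger-related causes

# Total AI spending over the two years.
two_year_total = ai_spend_last_year + ai_spend_2026

# How many years of poverty eradication that total would fund.
years_of_poverty_relief = two_year_total / poverty_cost_per_year

# Daily deaths scaled to a full (non-leap) year.
deaths_per_year = hunger_deaths_per_day * 365

print(f"Two-year AI spending: ${two_year_total / 1e12:.2f} trillion")
print(f"Years of poverty relief: {years_of_poverty_relief:.1f}")
print(f"Hunger-related deaths per year: {deaths_per_year:,}")
```

Run as written, it prints a two-year total of $4.02 trillion, about 12.6 years of poverty relief (hence “more than a decade”), and 8,760,000 deaths per year, which rounds to the “about 9 million” in the text.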

If you’ve encountered any AI bloopers that I’ve not covered, please share them below. :-) I hope everyone is doing well.

Thanks for reading and I’ll see you on the other side!

Just want to quickly note: using the wrong model for something it's not trained to do will always produce this kind of result. The "live voice" models (like the one Husk always uses, based on Sesame AI's models that I assume OpenAI bought) pour so much computational power into sounding realistic (inflections, "ums", sighs, etc.) that they don't have enough capacity left to operate at the level of the higher-end text-to-text "thinking" models. The same applies to text-to-image models.

To be clear, this is not in defense of Sam Altman... I have zero respect for him. But I think the potential demise of humanity at the hands of AI and those who control it is a very real possibility, so I appreciate the word you spread on this website and want to offer my perspective to support it.

So, what's my point? I want to note that in this case, he's speaking from a place of having worked with far more advanced models than you or I have ever seen.

Why do I think that? I use advanced models daily for programming, planning, and writing to help develop analytical techniques for neuroscience research (human intracranial EEG data). They are extraordinarily impressive right now if you know how to use them properly.

While I can't say that I see us being anywhere close to "the singularity" right now, I think we should recognize that even the models we can work with daily would likely be far more capable if simply left to think 10x longer (or left unpruned). Monthly subscriptions can't be sustainable for the parent companies unless they severely throttle their high-end models. And if you look at how the models we can use are actually trained (with a more expensive and more intelligent "teacher" model), there is good reason to believe that, even without further development, much better models already exist that aren't available to the public.

So, just wanted to share my opinion: I think the CEO of OpenAI is likely speaking from having seen first-hand what these "unleashed" models are capable of. Super open to discussion if anyone thinks any of this is incorrect or unfair, or if you'd like further clarification on any topic. Love your articles, Émile, keep up the good work!

New drinking game: take a shot every time a VC, AI hypester, or AI CEO says the vaguely defined thing they keep trying (and failing) to build will 100% kill all of humanity.